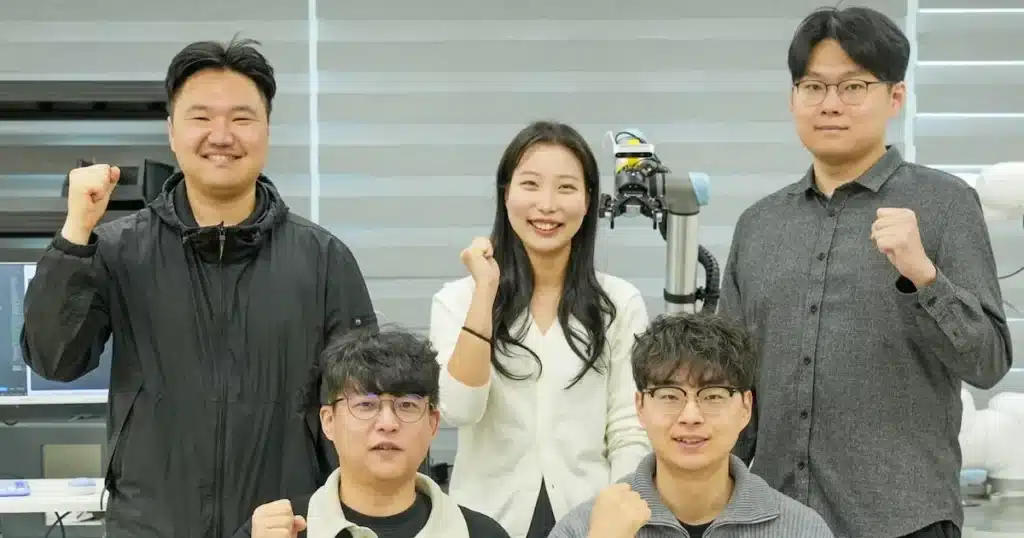

Researchers at the Korea Electrotechnology Research Institute (KERI) have developed an AI system that enables robots to interpret human speech, infer intent, and carry out complex tasks autonomously. The technology targets vertical factory environments, particularly in semiconductor production, where tight space constraints and high precision demands pose significant challenges.

Autonomous Agentic AI: A New Era for Robotics

The technology is based on agentic AI, which lets robots go beyond rigid, pre-programmed instructions. Traditional robots require manual code changes for every new part or process change, which causes substantial errors and lost time in manufacturing hubs. This system instead uses large language models (LLMs) to understand spoken directives and plan workflows on its own.

Developed in collaboration with a major domestic corporate research institute and public organizations, the system uses a multi-agent architecture. A primary agent receives task assignments from factory supervisors and delegates the work to specialized sub-agents: one analyzes the meaning of each command, and another handles precise robot control.
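The delegation pattern described above can be illustrated with a minimal sketch. It is not KERI's implementation: the class names (`PrimaryAgent`, `SemanticAgent`, `ControlAgent`), the action vocabulary, and the step strings are all hypothetical, and a simple keyword lookup stands in for the LLM that would perform semantic analysis in a real system.

```python
from dataclasses import dataclass


@dataclass
class ParsedCommand:
    action: str
    target: str


class SemanticAgent:
    """Hypothetical sub-agent: turns a spoken command into a structured intent.
    A real system would call an LLM here; a keyword lookup stands in for it."""

    ACTIONS = ("pick", "place", "inspect")

    def parse(self, command: str) -> ParsedCommand:
        words = command.lower().split()
        action = next((w for w in words if w in self.ACTIONS), None)
        if action is None:
            raise ValueError(f"no known action in: {command!r}")
        # Treat the last word as the target object (a deliberate simplification).
        return ParsedCommand(action=action, target=words[-1])


class ControlAgent:
    """Hypothetical sub-agent: converts a structured intent into robot steps."""

    def plan(self, cmd: ParsedCommand) -> list[str]:
        if cmd.action == "pick":
            return [f"move_to({cmd.target})", "close_gripper()", "lift()"]
        if cmd.action == "place":
            return [f"move_to({cmd.target})", "open_gripper()"]
        # Remaining action is "inspect".
        return [f"move_to({cmd.target})", "capture_image()"]


class PrimaryAgent:
    """Hypothetical orchestrator: receives the supervisor's task and delegates."""

    def __init__(self) -> None:
        self.semantic = SemanticAgent()
        self.control = ControlAgent()

    def handle(self, command: str) -> list[str]:
        intent = self.semantic.parse(command)  # step 1: understand the command
        return self.control.plan(intent)       # step 2: turn it into robot actions


if __name__ == "__main__":
    print(PrimaryAgent().handle("Please pick up the wafer"))
```

Keeping parsing and control in separate agents means either one can be swapped (for example, a different LLM or a different robot controller) without touching the other, which is one plausible reason for a modular multi-agent design.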

Solving Grounding Challenges for Real-World Accuracy

The new system addresses a critical limitation of earlier AI robots, the "grounding" problem of linking abstract instructions to physical reality, by integrating camera data. After receiving a command, the robot scans the 3D workspace and generates accurate coordinates for its actions. This turns it into "actionable AI" that can perform reliably in dynamic settings.
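One common way to turn camera data into action coordinates is pinhole back-projection: a pixel `(u, v)` with measured depth `d` maps to camera-frame coordinates `X = (u - cx)·d / fx`, `Y = (v - cy)·d / fy`, `Z = d`, which a calibrated rotation `R` and translation `t` then carry into the robot's frame. The article does not say how KERI's system computes its coordinates, so the sketch below only illustrates this standard technique; the function names and the intrinsic and calibration values are hypothetical.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class Intrinsics:
    fx: float  # focal length along x, in pixels
    fy: float  # focal length along y, in pixels
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def pixel_to_camera(u: float, v: float, depth_m: float, k: Intrinsics) -> Vec3:
    """Back-project pixel (u, v) with metric depth into camera-frame XYZ (metres)."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def camera_to_robot(p: Vec3, rot: list[list[float]], t: Vec3) -> Vec3:
    """Apply a hypothetical hand-eye calibration: p_robot = rot @ p_cam + t."""
    return tuple(
        sum(rot[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
    )


if __name__ == "__main__":
    k = Intrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)  # assumed values
    p_cam = pixel_to_camera(380.0, 240.0, 0.5, k)
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    p_robot = camera_to_robot(p_cam, identity, (0.1, 0.0, 0.2))
    print(p_cam, p_robot)
```

With these assumed values, a detection 60 pixels right of the image center at 0.5 m depth lands 5 cm to the side in the camera frame, and the calibration offset then places it in robot coordinates the controller can act on.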

Key Advantages in Manufacturing Efficiency

The system's main strengths are speed and error reduction. A verbal instruction alone triggers end-to-end execution within seconds, with no repetitive coding. This is especially valuable for mid-sized enterprises, where a single mistake can incur heavy costs in materials and downtime. Operators also report improved safety, since robots take over repetitive, hazardous semiconductor tasks.

Global tech companies such as OpenAI, Nvidia, and Tesla are pursuing similar approaches, now commonly called Vision-Language-Action (VLA) models, but these often demand vast amounts of data and hardware. KERI's modular design, by contrast, adapts readily to factory floors, and the institute reports top-tier field performance and strong deployment potential.

“Mid-sized manufacturing operations face high costs and error risks with AI adoption. This technology will elevate their global competitiveness,” stated Lee Joo-kyung, director of KERI’s research park.

The project aligns with the government's "Global K-College 30" initiative, which aims to bring AI talent into industry to sustain innovation.